Linux command reference

Notes and references on various commands on Linux systems.

- apt
- git
- rsync
- ffmpeg
- Resize images and remove metadata
- Reading and changing monitor settings
- OpenSSL
- Basic certbot usage
- Stream USB camera with VLC without transcoding
- Clean desktop on Linux systems
- Clean up old versions from snap
- Close an LVM
- Trigger VueScan to scan
- GPG passphrase prompt in terminal
- Reboot into UEFI/BIOS/firmware settings
- haproxy
- Networking
- Sorting ps output
- Docker

apt

apt-key is deprecated, how to add repos and keys

This (now) familiar error:

$ sudo apt update

W: GPG error: http://download.example.com/debian bookworm InRelease: The following signatures couldn't be verified because the public key is not available: NO_PUBKEY 1140AF8F639E0C39

E: The repository 'http://download.example.com/debian bookworm InRelease' is not signed.

N: Updating from such a repository can't be done securely, and is therefore disabled by default.

N: See apt-secure(8) manpage for repository creation and user configuration details.

The path where keys are stored has changed, and you need a signed-by

attribute in the repo .list file referencing the key's path.

TLDR

- New path for key files, use /usr/share/keyrings.
- Both .asc (ASCII-armor) and .gpg (binary) files work.
- Add a signed-by to the .list file, referencing the filename: deb [arch=amd64 signed-by=/usr/share/keyrings/key.asc] http://download.example.com/debian trixie main
- Remove old keys from apt-key

The keys can be placed in:

- /usr/share/keyrings: Requires signed-by in repo .list file, both binary .gpg and ASCII-armored .asc files are fine.
- /etc/apt/keyrings: Requires signed-by in repo .list file, both .gpg and .asc files are fine.
- /etc/apt/trusted.gpg.d: Implicitly trusted keys, ASCII-armored .asc files.

Retrieve the key from a keyserver

If you don't have a file with the GPG key, you can retrieve it from a keyserver and export an ASCII-armored copy:

$ gpg --keyserver hkp://keyserver.ubuntu.com:80 --recv-keys ${key_id}

$ gpg --armor --export ${key_id} > key.asc

Depending on your gpg.conf, you might not need to set --keyserver.

De-armoring the key

Convert an .asc ASCII-armored file to a binary formatted .gpg file:

$ file key.asc

key.asc: OpenPGP Public Key Version 4, Created Mon Nov 9 06:59:32 2020, RSA (Encrypt or Sign, 4096 bits); User ID; Signature; OpenPGP Certificate

$ cat key.asc | gpg --dearmor > key.gpg

$ file key.gpg

key.gpg: OpenPGP Public Key Version 4, Created Mon Nov 9 06:59:32 2020, RSA (Encrypt or Sign, 4096 bits); User ID; Signature; OpenPGP Certificate

This step isn't really needed if you use the /usr/share/keyrings path; Debian just recommends it for compatibility reasons.

Add the repo

Move the public key into place and ensure correct ownership and permissions:

$ sudo cp key.asc /usr/share/keyrings/

$ sudo chown root:root /usr/share/keyrings/key.asc

$ sudo chmod 644 /usr/share/keyrings/key.asc

I prefer using a template task in Ansible to manage the .list files:

deb [arch={{ ansible_architecture }} signed-by=/usr/share/keyrings/key.asc] http://download.example.com/{{ ansible_distribution | lower }} {{ ansible_distribution_release }} main

Which should look something like this:

$ cat /etc/apt/sources.list.d/example.list

deb [arch=amd64 signed-by=/usr/share/keyrings/key.asc] http://download.example.com/debian trixie main

With signed-by referencing where the file exists on your file system.
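The .list entry has a fixed shape, so it can be assembled from its parts. A minimal sketch, assuming a hypothetical helper named make_deb_line (it is not part of apt), using the example values from this section:

```shell
# Hypothetical helper: print a one-line sources.list entry with a signed-by option.
# Arguments: architecture, key path, repository URL, suite, component.
make_deb_line() {
    printf 'deb [arch=%s signed-by=%s] %s %s %s\n' "$1" "$2" "$3" "$4" "$5"
}

# Example: print the entry for the example repository.
make_deb_line amd64 /usr/share/keyrings/key.asc \
    http://download.example.com/debian trixie main
```

The printed line could then be written to /etc/apt/sources.list.d/example.list, for instance by piping it through sudo tee.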

Alternative ways to manage the repo


You can add the repo's .list file with the apt_repository module:

- name: add apt repo
  apt_repository:
    repo: "deb [arch={{ ansible_architecture }} signed-by=/usr/share/keyrings/key.asc] http://download.example.com/{{ ansible_distribution | lower }} {{ ansible_distribution_release }} main"
    #repo: "deb [arch={{ ansible_architecture }}] http://download.example.com/{{ ansible_distribution | lower }} {{ ansible_distribution_release }} main"
    state: present
    update_cache: false
    filename: /etc/apt/sources.list.d/example

Alternatively use the deb822_repository module:

- name: add your repo
  deb822_repository:
    name: example
    types: deb
    uris: http://download.example.com/{{ ansible_distribution | lower }}
    suites: "{{ ansible_distribution_release }}"
    components: stable
    architectures: amd64
    signed_by: /usr/share/keyrings/key.gpg

Or, instead of a .list file, create a .sources file like /etc/apt/sources.list.d/example.sources:

Types: deb
URIs: http://download.example.com/{{ ansible_distribution | lower }}
Suites: {{ ansible_distribution_release }}
Components: main
Signed-By: /usr/share/keyrings/key.gpg

Cleanup

Then clean up the key from apt-key if it was there already. List existing keys with:

$ sudo apt-key list

Debian/Ubuntu keys are still there for compatibility reasons, so grep them out:

$ sudo apt-key list | grep uid | grep -vi debian

If that turns up any keys, delete them:

$ sudo apt-key del support@example.com

Now you can apt update, apt install, and so on.
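The uid filter from the cleanup step can be tried on sample input before touching the real keyring. A minimal sketch, assuming a hypothetical helper named foreign_key_uids and made-up sample output; it also filters out Ubuntu keys, which goes slightly beyond the grep used in the cleanup step:

```shell
# Hypothetical helper: read `apt-key list`-style output on stdin and print the
# uid lines that mention neither Debian nor Ubuntu (case-insensitive).
foreign_key_uids() {
    grep 'uid' | grep -viE 'debian|ubuntu'
}

# Made-up sample input, for illustration only.
foreign_key_uids <<'EOF'
pub   rsa4096 2020-11-09 [SC]
uid           [ unknown] Debian Archive Automatic Signing Key <ftpmaster@debian.org>
uid           [ unknown] Example Support <support@example.com>
EOF
```

On a real system the input would come from sudo apt-key list instead of the here-document.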

Repository changed its 'Suite' value. This must be accepted explicitly

If you find yourself on some old Debian-based system you'd forgotten about

and try to apt install something and get this error message:

$ sudo apt update

Reading package lists... Done

E: Repository 'http://deb.debian.org/debian buster InRelease' changed its 'Suite' value from 'stable' to 'oldoldstable'

N: This must be accepted explicitly before updates for this repository can be applied. See apt-secure(8) manpage for details.

The man page has a lot of dense info, so here's the command:

$ sudo apt-get --allow-releaseinfo-change update

Now you can update your system (and hopefully not forget about it again :).

References

"apt-key is deprecated in Debian/Ubuntu - how to fix in Ansible", Jeff Geerling

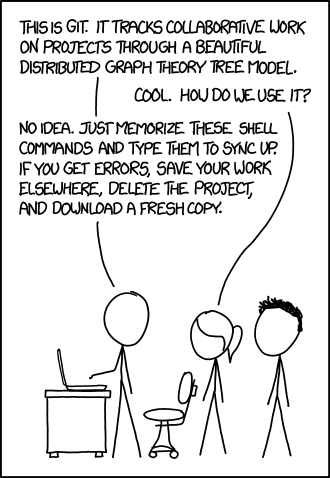

git

Submodules

By default, submodules are added at fixed commits. Git can now also set submodules to track branches.[5]

Adding a new submodule tracking $branch:[6]

$ git submodule add -b $branch $url

$ git submodule update --remote

Change an existing submodule to track $branch:[7]

$ # change the submodule definition in the parent repo

$ git config -f .gitmodules submodule.${submodule}.branch $branch

$ # and make sure the submodule itself is actually at that branch

$ cd $submodule

$ git checkout $branch

$ git branch -u origin/$branch $branch

Erase file from history

It's a good idea to take a note of the commit hashes the file occurs in:

$ git log --all --oneline -- "${filename}"

Erase $filename from the Git history:[8]

$ git filter-branch --force --index-filter \

"git rm --cached --ignore-unmatch ${filename}" \

--prune-empty --tag-name-filter cat -- --all

It's smart to search the commit history again for the file afterwards. When you are done, push to all remote branches (on all remotes):

$ git push origin --force --all

Don't forget remotes other than origin (if you have them). Note that this

rewrites the Git history, altering commit hashes.

References

"Force Git modules to always stay current", Stack Overflow

"How can I specify a branch/tag when adding a Git submodule?", answer by vogella. Stack Overflow

"How can I specify a branch/tag when adding a Git submodule?", answer by VonC. Stack Overflow

"Permanently removing a file from git history", answer by Simba. Stack Overflow

rsync

Sync and preserve file attributes

This preserves file attributes, and mirrors the source and destination:

$ rsync -rahS \

--numeric-ids \

--info=progress2 \

--delete-after \

--exclude="lost+found" \

--exclude="*.tmp" \

$source $destination

Arguments:[9]

- -h/--human-readable: Output numbers in human-readable format.
- -S/--sparse: Turn sequences of null bytes into sparse blocks.
- -a: Archive mode,[10] equivalent to -rlptgoD:
  - -r/--recursive: Recurse into directories.
  - -l/--links: Preserve symlinks (copy them as symlinks).
  - -p/--perms: Preserve permissions.
  - -t/--times: Preserve modification times (file attributes).
  - -g/--group: Preserve group ownership.
  - -o/--owner: Preserve user ownership (only root can chown files).
  - -D: Same as --devices --specials:
    - --devices: Preserve device files (requires root).
    - --specials: Preserve special files.
- --numeric-ids: Don't map uid/gid values by names of users/groups, preserve them as-is.
- --info=progress2: Outputs statistics based on the whole transfer.
- --delete-after: Receiver deletes after the transfer completes (not during).

Sometimes useful:

--remove-source-files: Sender removes synchronized files (use with caution).
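Because --delete-after removes files from the destination, previewing the transfer first can be worthwhile. A minimal sketch, assuming a hypothetical wrapper named mirror_cmd that only prints the command line and placeholder paths under /mnt; --dry-run is rsync's own flag for a trial run that makes no changes:

```shell
# Hypothetical wrapper: print the mirror command for a source and destination.
# Pass "preview" as the third argument to add --dry-run.
# Note: this only prints the command; paths containing spaces would need quoting.
mirror_cmd() {
    src=$1
    dst=$2
    extra=""
    if [ "${3:-}" = "preview" ]; then
        extra=" --dry-run"
    fi
    printf 'rsync -rahS --numeric-ids --info=progress2 --delete-after --exclude="lost+found" --exclude="*.tmp"%s %s %s\n' \
        "$extra" "$src" "$dst"
}

# Placeholder paths, for illustration only.
mirror_cmd /mnt/source/ /mnt/destination/ preview
```

Running the printed preview command first, then the same command without --dry-run, keeps the deletion step from being a surprise.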

References

man rsync

"What is archive mode in rsync?", answer by Andew. Server Fault.

ffmpeg

Converting DVD to file

Convert a .VOB from a DVD to MP4:

$ ffmpeg \

-i VTS_01_2.VOB \

-b:v 1500k -r 30 -vcodec h264 -strict -2 -acodec aac -ar 44100 \

-f mp4 VTS_01_2.mp4

Writes VTS_01_2.VOB as VTS_01_2.mp4.

References

"Avconv/FFmpeg - VOB to mp4", answer by llogan. Stack Overflow

"How to convert DVD to mp4 with ffmpeg", DEV Community

Resize images and remove metadata

Inspect and strip metadata with exiftool:

$ sudo dnf install perl-Image-ExifTool

$ exiftool ${imagefile}

$ exiftool -all= -overwrite_original ${imagefile}

Strip metadata with mogrify (provided by ImageMagick), which works for most formats:

$ sudo dnf install imagemagick

$ mogrify -strip ${imagefile}

Resize a .jpg image with mogrify:

$ mogrify -format jpg -resize 422x316 image.jpg

$ mogrify -format jpg -resize "50%" ${imagefile}

Arguments to mogrify:

- -strip: remove metadata
- -format $format: output format
- -resize $geometry / -resize "N%": output size

Optimize .png file sizes and remove metadata with optipng:

$ sudo dnf install optipng

$ optipng -strip all image.png

Arguments for optipng:

- -strip all: remove metadata
- -o: optimization level, defaults to 2.

Optimize .jpg files and remove metadata with jpegoptim:

$ sudo dnf install jpegoptim

$ jpegoptim --strip-all image.jpg

$ jpegoptim --strip-all --max 85 ./*.jpg

Arguments for jpegoptim:

- --strip-all/-s: strip all metadata
- --max/-m: quality factor, 0-100.

References

"How to strip metadata from image files", Unix & Linux Stack Exchange

Reading and changing monitor settings

Modern monitors have a Virtual Control Panel (VCP) that can be read and changed over an I²C bus with ddcutil.[14]

$ sudo dnf install ddcutil

$ sudo apt install ddcutil

The ddcutil package is available in the default repos on Fedora, Debian and Ubuntu.

Monitor settings

Show basic information about your monitor:

$ sudo ddcutil detect

Display 1
   I2C bus: /dev/i2c-13
   DRM connector: card1-DP-1
   EDID synopsis:
      Mfg id: BNQ - UNK
      Model: BenQ PD3205U
      Product code:
      Serial number:
      Binary serial number:
      Manufacture year:
   VCP version: 2.2

Show all known settings and values:

$ sudo ddcutil getvcp known

VCP code 0x10 (Brightness): current value = 42, max value = 100

VCP code 0x12 (Contrast ): current value = 50, max value = 100

Show a specific setting:

$ sudo ddcutil getvcp 0x10

VCP code 0x10 (Brightness): current value = 42, max value = 100

Settings can then be modified with setvcp.

$ sudo ddcutil setvcp 0x10 50

Sets the brightness of the display to 50%.
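The getvcp output line carries both the current and the maximum value, so a relative change can be computed from it. A minimal sketch, assuming a hypothetical helper named next_brightness; it only parses text and does the arithmetic, and does not call ddcutil itself:

```shell
# Hypothetical helper: given a `ddcutil getvcp` output line and a step,
# print the new value, capped at the reported maximum.
next_brightness() {
    line=$1
    step=$2
    cur=$(printf '%s\n' "$line" | sed -n 's/.*current value = *\([0-9][0-9]*\).*/\1/p')
    max=$(printf '%s\n' "$line" | sed -n 's/.*max value = *\([0-9][0-9]*\).*/\1/p')
    new=$((cur + step))
    if [ "$new" -gt "$max" ]; then
        new=$max
    fi
    echo "$new"
}

# Sample line taken from the getvcp output in this section.
line='VCP code 0x10 (Brightness): current value = 42, max value = 100'
echo "sudo ddcutil setvcp 0x10 $(next_brightness "$line" 10)"
# prints: sudo ddcutil setvcp 0x10 52
```

In practice the line would come from sudo ddcutil getvcp 0x10 rather than a fixed string.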

Inputs

If your monitor has multiple inputs, ddcutil can switch between them.

First list the inputs and their VCP IDs:

$ sudo ddcutil capabilities

Feature: 60 (Input Source)

Values:

0f: DisplayPort-1

11: HDMI-1

13: USB-C

The feature-code for input sources is 60. Use setvcp to switch

input source:

$ sudo ddcutil setvcp 60 0x11

This switches the input source on the monitor to the HDMI-1 input.
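Looking up the value for an input by name avoids reading the capabilities list by eye. A minimal sketch, assuming a hypothetical helper named input_value and that the capabilities output has the shape of the excerpt in this section (real output may differ between monitors):

```shell
# Hypothetical helper: read `ddcutil capabilities` output on stdin and print
# the hex value listed under feature 60 for the named input.
input_value() {
    awk -v name="$1" -F': *' '
        /Feature: 60/ { in_feature = 1; next }   # start of the input source block
        /Feature:/    { in_feature = 0 }         # any other feature ends it
        in_feature && $2 == name { gsub(/^[ \t]+/, "", $1); print $1 }
    '
}

# Sample input copied from the capabilities excerpt.
input_value HDMI-1 <<'EOF'
Feature: 60 (Input Source)
   Values:
      0f: DisplayPort-1
      11: HDMI-1
      13: USB-C
EOF
# prints: 11
```

The result could be passed on as sudo ddcutil setvcp 60 0x11.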

References

rockowitz/ddcutil, GitHub

OpenSSL

Inspect a certificate

Read a certificate from a .pem or .crt file:[16]

$ openssl x509 -in ${path} -noout -text

Get the fingerprint of a certificate with -fingerprint:

$ openssl x509 -in ${path} -noout -fingerprint -sha1

OpenSSL defaults to the SHA1 fingerprint. Use -sha512, -sha256, -md5, etc. to get fingerprints for other hash algorithms.
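Fingerprints from different sources often differ only in case, colons, or the label in front, which makes comparing them by eye error-prone. A minimal sketch, assuming a hypothetical helper named normalize_fingerprint and a shortened, made-up fingerprint value:

```shell
# Hypothetical helper: normalize `openssl x509 -fingerprint` output read on stdin.
# Drops everything up to the "=", removes the colons, and lowercases the hex.
normalize_fingerprint() {
    sed 's/^.*=//' | tr -d ':' | tr 'A-F' 'a-f'
}

# Made-up, shortened value for illustration only.
printf 'SHA1 Fingerprint=AB:CD:EF:01:23\n' | normalize_fingerprint
# prints: abcdef0123
```

Two normalized fingerprints can then be compared with a plain string test.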

Retrieve a certificate over TCP

Retrieve an SSL certificate over TCP with s_client:[17]

$ openssl s_client -connect ${host}:${port} </dev/null 2>/dev/null

This can then be piped into openssl x509 with -in /dev/stdin:

$ openssl s_client -connect ${host}:${port} </dev/null 2>/dev/null |

openssl x509 -in /dev/stdin -noout -fingerprint

OpenSSL performs the TLS handshake and prints the SSL certificate to stdout.

MySQL

Fetch the server certificate from MariaDB/MySQL:[18]

$ openssl s_client -starttls mysql -connect ${host}:3306

MariaDB/MySQL support requires OpenSSL >=1.1.1 (released in 2018).

References

"Verifying a SSL certificate's fingerprint?", Unix & Linux Stack Exchange

"Get certificate fingerprint of HTTPS server from command line?", Stack Overflow

"Can I use openssl s_client to retrieve the CA certificate for MySQL?", answer by yonran. Stack Overflow

"How to get the Root CA Certificate Fingerprint using openssl", Stack Overflow

Basic certbot usage

Create (request) a new cert for ${name}:

$ certbot certonly -d ${name}

The new cert exists in the certbot-managed dir /etc/letsencrypt/live:

$ ls -d /etc/letsencrypt/live/${name}

/etc/letsencrypt/live/${name}

If you need to delete a cert for ${name} (certbot delete removes the local files; revoking is a separate certbot revoke command):

$ certbot delete --cert-name ${name}

Stream USB camera with VLC without transcoding

$ cvlc v4l2:///dev/video0 --sout '#standard{access=http,mux=ts,dst=:8080}'

Clean desktop on Linux systems

Create an empty dir and make it unwritable:

$ mkdir ~/.empty

$ sudo chattr -fR +i ~/.empty

$ lsattr -d ~/.empty

----i---------e------- ~/.empty

Change XDG_DESKTOP_DIR in ~/.config/user-dirs.dirs to point at the empty dir. On KDE Plasma you can also set url in ~/.config/plasma-org.kde.plasma.desktop-appletsrc:

url=/home/${USER}/.empty/

Now the desktop won't get cluttered.

Clean up old versions from snap

The snap packages can take up a lot of space:

LANG=C snap list --all | awk '/disabled/{print $1, $3}' |
    while read snapname revision; do
        snap remove "$snapname" --revision="$revision"
    done

This removes old versions of installed "packages".
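Since the loop removes revisions immediately, it can help to print the commands first and inspect them. A minimal sketch, assuming a hypothetical helper named snap_cleanup_cmds and made-up sample output in the general shape of snap list --all:

```shell
# Hypothetical helper: read `snap list --all` output on stdin and print one
# removal command per disabled revision instead of running it.
snap_cleanup_cmds() {
    awk '/disabled/ { printf "snap remove \"%s\" --revision=\"%s\"\n", $1, $3 }'
}

# Made-up sample input, for illustration only.
snap_cleanup_cmds <<'EOF'
Name    Version   Rev   Tracking       Publisher  Notes
core22  20240111  1122  latest/stable  canonical  base,disabled
core22  20240408  1380  latest/stable  canonical  base
EOF
# prints: snap remove "core22" --revision="1122"
```

On a real system the input would be LANG=C snap list --all, and the printed lines could be reviewed before being run.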

Close an LVM

$ sudo lvchange -an /dev/${vol_group_name}/${vol_name}

Deactivates (closes) an LVM logical volume, for example so that a USB drive can be luksClosed.

Trigger VueScan to scan

$ xdotool windowactivate --sync $(xdotool search --name "^VueScan.*${titlebar_part}") && sleep 1 && xdotool key KP_Enter

Super hacky way to trigger VueScan, as long as the regex

^VueScan.*${titlebar_part} is in the titlebar. Works for other programs as

well, it's just matching the titlebar and making a keypress with xdotool.

GPG passphrase prompt in terminal

export GPG_TTY=$(tty)

This will cause GPG to prompt for passphrase with an ncurses-interface in the terminal, instead of trying to use KDE/GNOME keychain, making it work over ssh as well.

Reboot into UEFI/BIOS/firmware settings

$ sudo systemctl reboot --firmware-setup

haproxy

Various summary info:[20]

$ echo "show info;show stat;show table" | socat /var/lib/haproxy/stats stdio

Cleanly print stats for a few columns:

$ echo "show stat" | socat /var/lib/haproxy/stats stdio | awk 'BEGIN{FS=","} {sub(/^#\ */, "", $0); print $1,$2,$9,$10}' | column -t

List all available column names:

$ echo "show stat" | socat /var/lib/haproxy/stats stdio | awk 'BEGIN {FS=","} NR == 1 {sub(/^#\ */, "", $0); for (i=1; i < NF; i++) { print "$"i" = "$i }}'

More in HAProxy documentation.
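Selecting columns by name instead of by position makes the awk one-liner less dependent on the column order. A minimal sketch, assuming a hypothetical helper named stat_columns and a shortened, made-up sample of the CSV that show stat prints:

```shell
# Hypothetical helper: read `show stat` CSV on stdin and print the named columns.
# Usage: stat_columns pxname svname ...
stat_columns() {
    awk -v want="$*" '
        BEGIN { FS = ","; n = split(want, names, " ") }
        NR == 1 {
            sub(/^# */, "", $0)                 # strip the leading "# " from the header
            for (i = 1; i <= NF; i++) idx[$i] = i
            next
        }
        NF == 0 { next }                        # skip empty lines
        {
            line = ""
            for (j = 1; j <= n; j++) {
                sep = (j > 1) ? " " : ""
                val = (names[j] in idx) ? $(idx[names[j]]) : "-"   # "-" for unknown names
                line = line sep val
            }
            print line
        }
    '
}

# Shortened, made-up sample input.
printf '# pxname,svname,qcur,qmax,\nweb,FRONTEND,0,3,\n' | stat_columns pxname qmax
# prints: web 3
```

With a running HAProxy the input would come from the socat command used throughout this section, and the output can still be piped through column -t.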

Networking

Handy output for tcpdump:[20]

$ tcpdump -nlei $interface

veth interfaces

See what interface is paired with a veth interface:[20]

$ ethtool -S $vethDevice

NIC statistics:

peer_ifindex: 255

rx_queue_0_xdp_packets: 0

rx_queue_0_xdp_bytes: 0

rx_queue_0_drops: 0

rx_queue_0_xdp_redirect: 0

rx_queue_0_xdp_drops: 0

rx_queue_0_xdp_tx: 0

rx_queue_0_xdp_tx_errors: 0

tx_queue_0_xdp_xmit: 0

tx_queue_0_xdp_xmit_errors: 0

$ ethtool -S vethc31a9a9 | grep "peer_ifindex" | awk -F': ' '{ print $2 }'

255

The peer_ifindex (peer interface index) should match the

entry number in the output of ip link or ip addr.
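Matching the index against ip link by hand can be scripted. A minimal sketch, assuming a hypothetical helper named ifname_by_index, input in the one-line format of ip -o link, and abbreviated made-up sample lines; the peer often lives in another network namespace (a container, for example), in which case the listing has to come from inside that namespace:

```shell
# Hypothetical helper: read `ip -o link` output on stdin and print the name of
# the interface with the given index, without any "@ifN" suffix.
ifname_by_index() {
    awk -v idx="$1" -F': ' '$1 == idx { sub(/@.*/, "", $2); print $2 }'
}

# Abbreviated, made-up sample input.
ifname_by_index 255 <<'EOF'
254: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500
255: veth1234@if254: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500
EOF
# prints: veth1234
```

The index argument would be the peer_ifindex value extracted with the ethtool pipeline.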

Namespaces

Network namespaces are also files:[20]

$ ls /var/run/netns

So finding the inodes of a process's network namespace is easy:

$ readlink /proc/{0..9}*/ns/net | sort | uniq

$ stat /var/run/netns/*

Misc Linux notes, Ziggit's notes

Sorting ps output

Sort by memory:[21]

$ ps au --sort=-%mem

$ ps auk-%mem

Other parameters for --sort can also be used.

Generic linux notes, Ziggit's notes

Docker

Identify which Docker container owns which overlay directory

To identify which container a dir in /var/lib/docker/overlay2 belongs to:

$ docker inspect $(docker ps -qa) |

jq -r 'map([.Name, .GraphDriver.Data.MergedDir])

| .[]

| "\(.[1]): \(.[0][1:])"'

Outputs a list of overlay directories and container names.

Images

List all dangling images:[22]

$ docker images -f dangling=true

"How do I identify which container owns which overlay directory?", answer by Matthew. Stack Overflow